What to Fix First in Data Center Environment Control

When uptime, thermal stability, and asset protection are on the line, fixing things in the right order matters. In data center environment control, project managers and engineering leads should first address the factors that most directly affect reliability, energy efficiency, and equipment lifespan. This guide outlines where to focus first to reduce operational risk, improve system performance, and support scalable infrastructure decisions.

What should be fixed first in data center environment control?

For most facilities, the first priority is not cosmetic improvement or isolated component replacement. The first fixes should target the conditions behind immediate downtime risk, hidden thermal stress, sensor inaccuracy, and uneven airflow. Project managers are often asked to improve resilience under tight budgets and compressed delivery schedules, so the order of fixes matters as much as the technical solutions themselves.

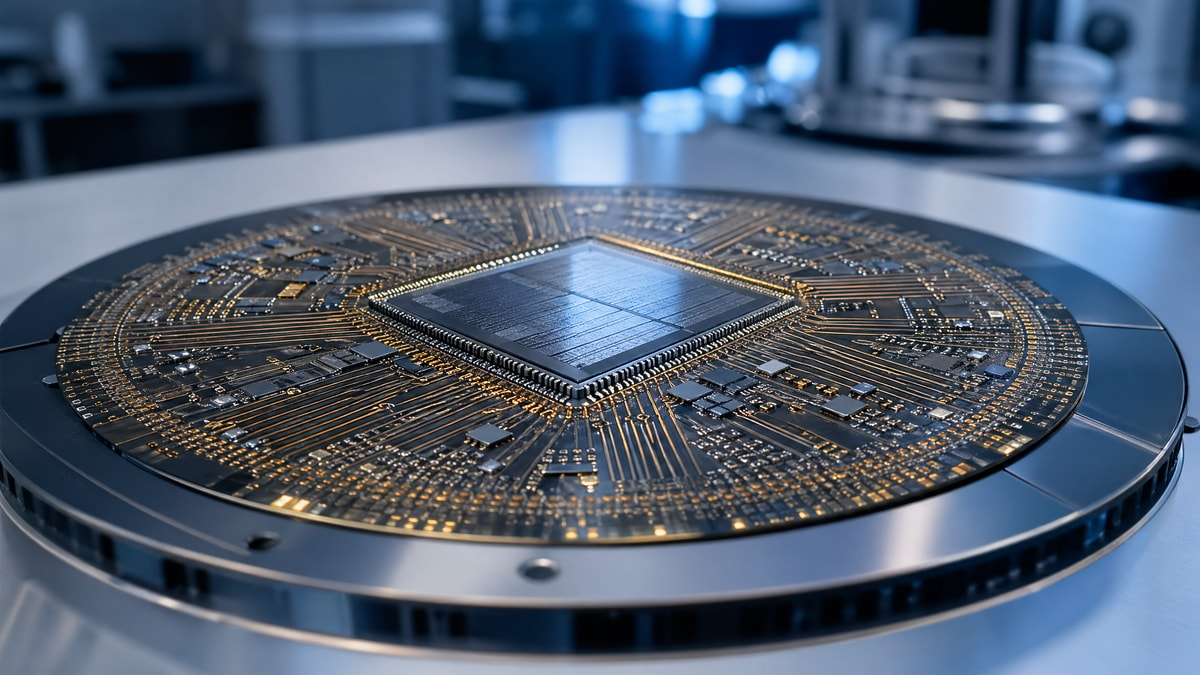

In practical terms, start with the issues that affect the whole room before the issues that affect a single device. If the cooling path is unstable, if humidity drifts beyond acceptable bands, or if monitoring points are sparse or badly placed, any downstream equipment upgrade will underperform. This is especially relevant for organizations handling semiconductor-related workloads, industrial automation data, or precision sensing systems, where thermal fluctuation and contamination can undermine data fidelity and equipment reliability.

- Stabilize inlet temperature consistency before replacing otherwise functional cooling units.

- Correct humidity control before investigating isolated static discharge or condensation complaints.

- Fix airflow management gaps such as bypass air, recirculation, and underfloor leakage before increasing cooling capacity.

- Validate sensor placement and calibration before relying on dashboards for capital decisions.

- Review alarm thresholds, response workflows, and redundancy logic before expanding rack density.
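The checklist above is essentially a set of precedence rules: each fix should land before the step it protects. A minimal Python sketch, assuming the step labels below (paraphrased from the list, not a standard taxonomy), turns those rules into one valid working order with the standard-library `graphlib` module (Python 3.9+).

```python
from graphlib import TopologicalSorter

# Hypothetical step labels paraphrased from the checklist.
# Each key maps to the set of fixes that should be completed before it.
PREREQUISITES = {
    "replace cooling units": {"stabilize inlet temperature"},
    "investigate static or condensation complaints": {"correct humidity control"},
    "increase cooling capacity": {"fix airflow gaps"},
    "use dashboards for capital decisions": {"validate sensor placement and calibration"},
    "expand rack density": {"review alarm thresholds and redundancy logic"},
}


def fix_sequence(prereqs: dict[str, set[str]]) -> list[str]:
    """Return one order in which every prerequisite precedes the step that depends on it."""
    # static_order() raises graphlib.CycleError if the rules contradict each other
    return list(TopologicalSorter(prereqs).static_order())


order = fix_sequence(PREREQUISITES)
for position, step in enumerate(order, start=1):
    print(position, step)
```

Teams can add site-specific dependencies to the same dictionary (for example, making rack density expansion also wait on airflow fixes); a `CycleError` would flag contradictory rules before they reach a project plan.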

The highest-risk starting points

The most urgent fixes usually fall into four categories: thermal hotspots, uncontrolled humidity swings, poor air containment, and weak environmental visibility. These conditions do not simply reduce efficiency. They can distort maintenance planning, shorten hardware life, and create false confidence in system stability. In high-value digital infrastructure, especially where precision electronics and sensor-driven operations matter, these are not secondary issues.

The table below helps project teams prioritize environment control fixes based on risk, business impact, and implementation urgency rather than on vendor preference or single-equipment marketing claims.

A key takeaway is that more cooling is not always the right first fix. Many projects overspend on extra capacity while leaving recirculation, poor sensor logic, or bad zoning untouched. That approach inflates capital expense and still leaves the same reliability problem in place.
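One way to apply the risk, business impact, and urgency criteria mentioned above is a simple multiplicative score. The sketch below is illustrative only: the 1-to-5 ratings are hypothetical placeholders a team would replace with its own assessment, and the multiplicative form is one choice among several (a weighted sum is another).

```python
from dataclasses import dataclass


@dataclass
class Issue:
    name: str
    risk: int     # likelihood of downtime or damage, 1-5 (hypothetical scale)
    impact: int   # business consequence if it occurs, 1-5
    urgency: int  # how soon it must be addressed, 1-5

    @property
    def score(self) -> int:
        # Multiplicative, so an issue must rate high on all three criteria to rank first
        return self.risk * self.impact * self.urgency


# Placeholder ratings for the four high-risk categories named above
issues = [
    Issue("thermal hotspots", 5, 5, 4),
    Issue("uncontrolled humidity swings", 4, 4, 3),
    Issue("poor air containment", 4, 3, 4),
    Issue("weak environmental visibility", 3, 4, 3),
]

# sorted() is stable, so equal scores keep their listed order
ranked = sorted(issues, key=lambda i: i.score, reverse=True)
for issue in ranked:
    print(f"{issue.score:>3}  {issue.name}")
```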

Why airflow management usually comes before equipment replacement

In data center environment control, airflow is the delivery mechanism for every cooling strategy. If cold air does not reach server inlets predictably, or if hot exhaust loops back into intake paths, the room can show acceptable average conditions while individual racks operate well beyond safe thermal margins. This is one of the most common reasons project teams face recurring hotspots even after upgrading cooling units.
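To see how an acceptable room average can coexist with overheated racks, consider the sketch below. The readings are hypothetical, and the 27 °C upper limit is an assumption based on the commonly cited ASHRAE recommended inlet range of 18 to 27 °C; substitute the envelope that applies to your hardware class.

```python
from statistics import mean

# Hypothetical rack inlet readings in °C
inlet_c = {
    "A01": 22.0, "A02": 22.5, "A03": 23.0,
    "B01": 21.5, "B02": 29.5, "B03": 30.5,  # dense compute racks
    "C01": 20.5, "C02": 21.0, "C03": 21.5,
}

UPPER_LIMIT_C = 27.0  # assumed limit; use the envelope for your hardware class

room_avg = mean(inlet_c.values())
hot_racks = sorted(rack for rack, t in inlet_c.items() if t > UPPER_LIMIT_C)

print(f"room average: {room_avg:.1f} °C")
print(f"racks above {UPPER_LIMIT_C} °C: {hot_racks}")
```

Here the room average stays comfortably below the limit while two racks exceed it, which is exactly the pattern a single averaged reading hides.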

Common airflow faults that deserve immediate attention

- Open rack spaces without blanking panels, allowing hot exhaust recirculation.

- Cable cutouts and floor penetrations left unsealed, reducing static pressure under raised floors.

- Poor supply tile placement that sends cold air to low-load zones while dense racks starve.

- Lack of aisle containment in mixed-density layouts with changing load profiles.

- Cooling unit discharge and return paths positioned in ways that short-cycle air.
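A quick way to tell whether bypass or recirculation dominates is the Return Temperature Index (RTI) proposed by Herrlin: the air-handler temperature rise divided by the IT equipment temperature rise, expressed as a percentage. Values below 100% point to bypass air; values above 100% point to recirculation. The sketch below assumes room-level averaged temperatures and a hypothetical ±5-point tolerance around 100%.

```python
def return_temperature_index(t_supply: float, t_return: float,
                             t_it_in: float, t_it_out: float) -> float:
    """RTI in percent: air-handler temperature rise / IT equipment temperature rise."""
    it_delta = t_it_out - t_it_in
    if it_delta <= 0:
        raise ValueError("IT equipment outlet must be warmer than its inlet")
    return (t_return - t_supply) / it_delta * 100.0


def interpret_rti(rti: float, tolerance: float = 5.0) -> str:
    """Classify RTI using an assumed tolerance band around 100%."""
    if rti < 100.0 - tolerance:
        return "net bypass air (supply air returns without passing through IT)"
    if rti > 100.0 + tolerance:
        return "net recirculation (hot exhaust re-enters IT inlets)"
    return "approximately balanced"


# Hypothetical averaged readings in °C
rti = return_temperature_index(t_supply=18.0, t_return=26.0, t_it_in=20.0, t_it_out=32.0)
print(f"RTI = {rti:.0f}% -> {interpret_rti(rti)}")
```

In this example the air handlers see an 8 K rise while the IT equipment produces a 12 K rise, so roughly a third of the supply air is bypassing the racks, a typical symptom of unsealed cutouts or misplaced supply tiles.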

Why project managers should care

Airflow corrections are often among the fastest interventions with a measurable return. They are usually less disruptive than replacing chillers, CRAC units, or precision air handlers. For engineering leads, this means fewer schedule risks, easier change windows, and clearer before-and-after validation. For procurement teams, it means budget can be redirected toward higher-value instrumentation or future density upgrades rather than unnecessary oversizing.
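Before-and-after validation can be as simple as comparing the share of rack inlet readings inside a target band, plus the worst-case inlet. A minimal sketch, assuming hypothetical readings and an 18 to 27 °C target band (the commonly cited ASHRAE recommended inlet range):

```python
def fraction_in_band(readings_c, low_c: float = 18.0, high_c: float = 27.0) -> float:
    """Share of inlet readings inside the inclusive [low_c, high_c] band."""
    readings = list(readings_c)
    if not readings:
        raise ValueError("no readings supplied")
    inside = sum(1 for t in readings if low_c <= t <= high_c)
    return inside / len(readings)


# Hypothetical inlet readings (°C) at the same sensor points before and after sealing work
before = [22.0, 24.5, 28.0, 29.5, 26.0, 31.0, 23.5, 27.5]
after = [21.5, 23.0, 25.5, 26.5, 24.0, 26.8, 22.5, 25.0]

b, a = fraction_in_band(before), fraction_in_band(after)
print(f"in band before: {b:.0%}, after: {a:.0%}; max inlet {max(before)} -> {max(after)} °C")
```

Comparing the same sensor points under similar IT load keeps the before-and-after figures meaningful.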

How to prioritize humidity, temperature, and contamination control

Temperature gets the most attention, but data center environment control also depends on humidity stability and particulate awareness. For facilities serving semiconductor-adjacent operations, test infrastructure, edge analytics, or industrial sensor networks, stable conditions are essential because electronic accuracy and long-term reliability can degrade well before an outright failure occurs.

G-SSI’s cross-disciplinary perspective is valuable here. Data center environment decisions increasingly intersect with thermal management practices familiar to semiconductor fabrication, advanced packaging, industrial-grade MEMS deployment, and high-purity infrastructure planning. That does not mean every data room needs fab-level controls. It means project teams should evaluate whether their load profile, mission criticality, and data sensitivity demand tighter environmental governance than a generic facility baseline.

The following table provides a practical screening framework for data center environment control when teams must decide what to fix first and how strict each control band should be.

This framework helps teams avoid treating every environmental variable as equally urgent. Temperature uniformity and pressure integrity often come first. Humidity follows closely, especially in regions with large seasonal variation or facilities that cycle load sharply. Particulate control becomes more critical when infrastructure sits near manufacturing, logistics, or polluted outdoor air sources.
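The ordering described above can be expressed as a small screening heuristic. The sketch below is hypothetical: the priority numbers and the three site flags are illustrative assumptions that encode the paragraph's ordering (temperature uniformity and pressure integrity first, humidity close behind and promoted under seasonal swings or sharp load cycling, particulates promoted near pollution sources), not a published method.

```python
def screening_order(large_seasonal_swing: bool,
                    sharp_load_cycling: bool,
                    near_pollution_source: bool) -> list[str]:
    """Rank environmental variables by screening priority (lower number = address sooner)."""
    # Hypothetical baseline priorities reflecting the ordering described in the text
    priority = {
        "temperature uniformity": 1,
        "pressure integrity": 1,
        "humidity stability": 3,
        "particulate control": 4,
    }
    if large_seasonal_swing or sharp_load_cycling:
        priority["humidity stability"] = 2   # humidity follows closely
    if near_pollution_source:
        priority["particulate control"] = 2  # particulates become more critical
    # sorted() is stable, so ties keep the dictionary's insertion order
    return sorted(priority, key=priority.get)


print(screening_order(large_seasonal_swing=True, sharp_load_cycling=False,
                      near_pollution_source=False))
```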

Which monitoring gaps create the biggest blind spots?

Many projects struggle because they manage data center environment control with too little localized data. A single room sensor cannot represent conditions across diverse rack densities, changing airflow patterns, and mixed equipment generations. Teams may see a green status on the building dashboard while a dense compute row experiences chronically high inlet temperatures or oscillating humidity near cooling discharge zones.

Minimum monitoring improvements worth funding first

- Install rack-level temperature sensing at multiple vertical points for high-load cabinets.

- Review whether humidity sensors reflect actual room behavior or only return-air conditions.

- Correlate alarms with physical layout so technicians know exactly which aisle, row, or rack zone is affected.

- Check sensor calibration intervals and maintenance records before using historical trends for redesign decisions.

- Link environmental data to load changes, maintenance events, and control sequence adjustments.
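Several of these checks (multi-height coverage on high-load racks, alarm location, calibration currency) lend themselves to a simple sensor registry. This is a minimal sketch under stated assumptions: the `Sensor` fields, the three-height coverage rule, and the 365-day calibration interval are hypothetical and should be replaced with your own layout conventions and maintenance plan.

```python
from dataclasses import dataclass
from datetime import date, timedelta

CAL_INTERVAL = timedelta(days=365)              # assumed; use your maintenance plan's interval
REQUIRED_HEIGHTS = {"top", "middle", "bottom"}  # assumed coverage rule for high-load racks


@dataclass(frozen=True)
class Sensor:
    sensor_id: str
    aisle: str
    row: str
    rack: str
    height: str  # vertical position on the rack inlet
    last_calibrated: date


def overdue_for_calibration(sensors, today: date) -> list[str]:
    """IDs of sensors whose last calibration is older than the assumed interval."""
    return [s.sensor_id for s in sensors if today - s.last_calibrated > CAL_INTERVAL]


def missing_heights(sensors, high_load_racks) -> dict[str, set[str]]:
    """For each high-load rack, the required vertical positions that have no sensor."""
    covered: dict[str, set[str]] = {}
    for s in sensors:
        covered.setdefault(s.rack, set()).add(s.height)
    gaps = {rack: REQUIRED_HEIGHTS - covered.get(rack, set()) for rack in high_load_racks}
    return {rack: gap for rack, gap in gaps.items() if gap}


def alarm_location(s: Sensor) -> str:
    """Human-readable location so technicians know which aisle, row, and rack to check."""
    return f"aisle {s.aisle} / row {s.row} / rack {s.rack} ({s.height})"


# Hypothetical registry entries
sensors = [
    Sensor("T1", "Cold-2", "B", "B02", "top", date(2024, 1, 10)),
    Sensor("T2", "Cold-2", "B", "B02", "middle", date(2023, 3, 5)),
    Sensor("T3", "Cold-2", "B", "B02", "bottom", date(2024, 2, 1)),
    Sensor("T4", "Cold-2", "B", "B03", "middle", date(2024, 6, 15)),
]
print(overdue_for_calibration(sensors, today=date(2024, 9, 1)))
print(missing_heights(sensors, high_load_racks=["B02", "B03"]))
print(alarm_location(sensors[3]))
```

In this example, one sensor on rack B02 is past its assumed calibration interval, and rack B03 has only a mid-height sensor, so its top and bottom inlet conditions are invisible.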

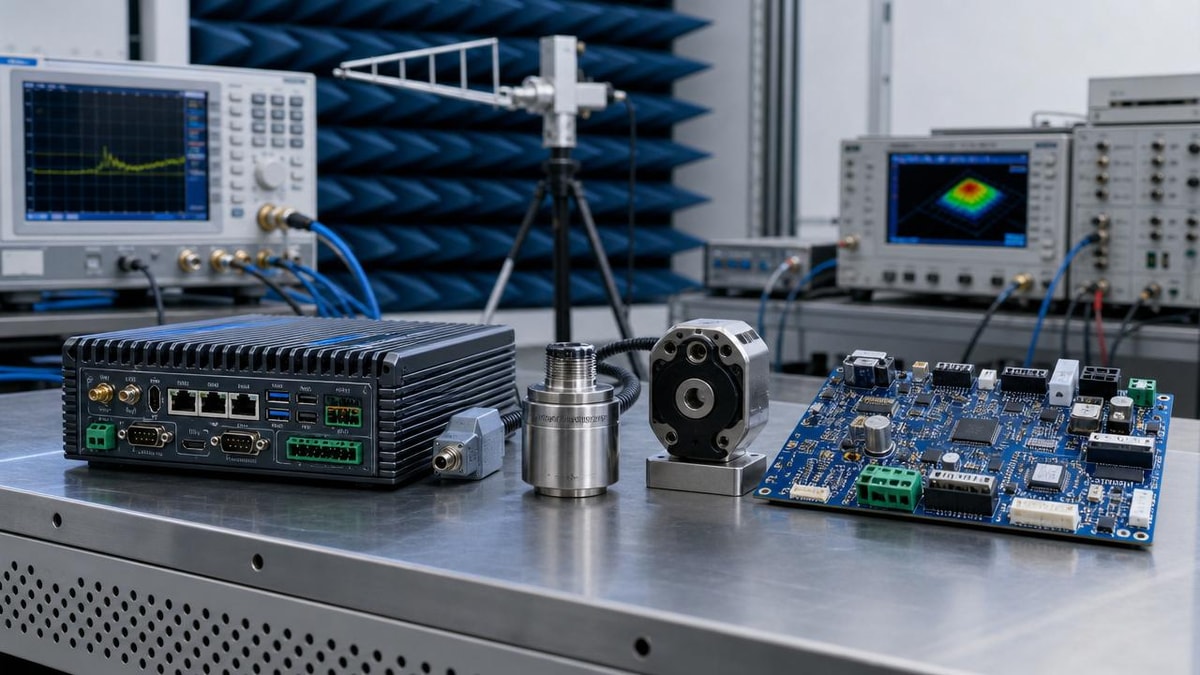

For project leaders, better monitoring is not only an operational upgrade. It is also a procurement protection measure. It prevents buying the wrong capacity, the wrong containment type, or the wrong control architecture. In facilities supporting high-value electronics, precision test equipment, or industrial sensing ecosystems, this level of environmental visibility can materially improve lifecycle decisions.

Procurement and selection: what should engineering leads evaluate before spending?

When budget is limited, the best data center environment control strategy is usually phased. Do not begin with a product list; begin with decision criteria. Project managers need a framework that balances reliability, implementation complexity, compliance expectations, and future expansion. This is where a benchmarking-oriented partner adds value by translating environmental problems into measurable selection logic.

A practical decision sequence

- Define the operational consequence of environmental failure: performance drop, data risk, component damage, or service outage.

- Separate room-level deficiencies from rack-level deficiencies so the scope matches the root cause.

- Evaluate whether the issue is capacity, distribution, control logic, or monitoring accuracy.

- Check whether current systems can be optimized before replacement.

- Screen all options against installation downtime, maintenance burden, and scalability.
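The final screening step can be made explicit with a weighted scoring matrix. In the sketch below, the criteria come from the list above, but the weights, option names, and 1-to-5 ratings are hypothetical; for downtime and maintenance burden a 5 means the least burden, so a higher total is always better.

```python
# Hypothetical criterion weights (sum to 1.0)
WEIGHTS = {"installation_downtime": 0.4, "maintenance_burden": 0.3, "scalability": 0.3}

# Hypothetical 1-5 ratings; for downtime and maintenance, 5 means the least burden
OPTIONS = {
    "seal leaks and add blanking panels":
        {"installation_downtime": 5, "maintenance_burden": 5, "scalability": 3},
    "retrofit aisle containment":
        {"installation_downtime": 3, "maintenance_burden": 4, "scalability": 5},
    "add cooling unit capacity":
        {"installation_downtime": 2, "maintenance_burden": 2, "scalability": 4},
}


def weighted_score(ratings: dict[str, int], weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted sum of ratings; every weighted criterion must be rated."""
    return sum(weights[c] * ratings[c] for c in weights)


ranked = sorted(OPTIONS, key=lambda name: weighted_score(OPTIONS[name]), reverse=True)
for name in ranked:
    print(f"{weighted_score(OPTIONS[name]):.2f}  {name}")
```

Writing the weights down forces the team to agree on what matters before vendors are compared, which is the point of starting with decision criteria rather than a product list.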

Comparison: optimize first or replace first?

The table below compares two common approaches to data center environment control. It is designed for engineering teams that need to justify budgets and timelines to management or cross-functional stakeholders.

In many cases, the hybrid path is the most defensible. It allows quick fixes with immediate impact while reserving capital for equipment that genuinely needs replacement. This approach is especially useful when data center infrastructure supports semiconductor development workflows, industrial IoT platforms, or precision sensing environments where future density and tighter environmental tolerance are likely.

Standards, compliance, and why benchmarking matters

Data center environment control should not be managed solely through rules of thumb. Project teams benefit from aligning decisions with recognized technical frameworks and measurement discipline. Depending on the facility, this may include widely referenced data center environmental guidance, internal reliability thresholds, calibration discipline, and control validation practices. In specialized sectors, broader awareness of standards culture from SEMI, ISO/IEC 17025, and reliability-focused qualification thinking can improve decision quality, even when those standards do not apply directly to the room itself.

This is where G-SSI offers a distinct advantage. Its focus on semiconductor fabrication environment control, advanced packaging, industrial-grade sensing, and high-purity process expectations brings a higher-resolution view of thermal management and data integrity. For project managers, that means environmental decisions can be benchmarked not only for basic facility uptime, but also for long-term reliability where precise electronics and sensitive infrastructure are involved.

Common mistakes that delay results

Mistake 1: Treating average room temperature as proof of control

Average conditions can hide severe local deviations. Always inspect rack inlets, aisle transitions, and high-density clusters before concluding that thermal control is acceptable.

Mistake 2: Buying extra cooling before fixing airflow leaks

This is a frequent source of wasted capital. If supply air is escaping or mixing incorrectly, more cooling simply feeds the same inefficiency.

Mistake 3: Ignoring control logic conflicts

Multiple units with overlapping setpoints can work against each other. The result is unstable humidity, unnecessary compressor activity, and inconsistent room behavior.
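Conflicting humidity setpoints can be detected before they show up as unstable behavior. The sketch below assumes a simplified on/off model in which each unit humidifies below one relative-humidity threshold and dehumidifies above another; real units often use deadbands and proportional control, so treat this as a first-pass screen. Two units fight whenever one unit's humidify threshold sits above another unit's dehumidify threshold, because any room RH between those values makes them act in opposite directions.

```python
from itertools import permutations
from typing import NamedTuple


class Unit(NamedTuple):
    name: str
    humidify_below: float    # %RH below which the unit adds moisture
    dehumidify_above: float  # %RH above which the unit removes moisture


def fighting_pairs(units: list[Unit]) -> list[tuple[str, str]]:
    """Pairs (a, b) where some room RH makes unit a humidify while unit b dehumidifies."""
    return [
        (a.name, b.name)
        for a, b in permutations(units, 2)
        # Any RH strictly between b's dehumidify threshold and a's humidify threshold
        if a.humidify_below > b.dehumidify_above
    ]


# Hypothetical setpoints for three units serving the same room
units = [
    Unit("CRAC-1", 40.0, 55.0),
    Unit("CRAC-2", 45.0, 60.0),
    Unit("CRAC-3", 58.0, 65.0),
]
print(fighting_pairs(units))
```

With these setpoints, CRAC-3 humidifies and CRAC-1 dehumidifies whenever room RH sits between 55% and 58%, which produces the unnecessary compressor and humidifier activity described above.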

Mistake 4: Underestimating the value of calibrated sensing

Bad data creates bad upgrades. Before approving redesigns, confirm that environmental measurements are representative, current, and traceable within your maintenance practice.

FAQ: real decision questions from project managers

How do I know whether my first fix should be airflow or cooling capacity?

Compare total installed cooling capacity with actual load, then inspect rack-level temperature distribution. If capacity appears sufficient but hotspots remain localized, airflow is usually the first fix. If temperatures rise broadly across the room during predictable load periods even after airflow corrections have been made, capacity may be the true constraint.
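The decision rule in this answer can be sketched as a small function. The thresholds are assumptions: the 27 °C limit, the 25% cut-off separating localized from broad hotspots, and the simple installed-versus-load capacity test (which ignores redundancy such as N+1) are illustrative, not standard values. Treating installed capacity below load as a capacity problem outright is also an added assumption.

```python
def suggest_first_fix(installed_cooling_kw: float, it_load_kw: float,
                      rack_inlets_c: list[float], airflow_fixed: bool = False,
                      limit_c: float = 27.0, localized_share: float = 0.25) -> str:
    """Heuristic: spare capacity plus localized hotspots points to airflow first."""
    hot = [t for t in rack_inlets_c if t > limit_c]
    if not hot:
        return "no thermal fix indicated"
    share_hot = len(hot) / len(rack_inlets_c)
    # Simplified capacity test; a real check would account for redundancy (e.g. N+1)
    if installed_cooling_kw < it_load_kw:
        # Installed capacity below load: airflow fixes alone cannot close the gap
        return "capacity"
    if share_hot <= localized_share or not airflow_fixed:
        # Localized hotspots, or broad heating that airflow work has not yet addressed
        return "airflow"
    # Broad heating persists after airflow corrections
    return "capacity"


# Hypothetical site: 500 kW installed, 300 kW IT load, one hot rack out of eight
print(suggest_first_fix(500, 300, [22, 23, 29, 24, 22, 23, 22, 21]))
```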

What level of monitoring is enough for data center environment control?

At minimum, critical racks should have multi-point inlet temperature visibility, and humidity sensing should reflect actual occupied zones rather than a single return-air location. More complex sites may also need differential pressure, filter condition tracking, and event correlation between IT load and environmental response.

Is stricter environmental control always better?

No. Tighter control bands can increase complexity and energy use if they are not justified by the workload, hardware sensitivity, or compliance requirement. The right target is stable, appropriate control based on mission criticality and asset sensitivity, not unnecessarily aggressive settings.

How should I phase upgrades when downtime windows are limited?

Start with non-invasive diagnostics, sensor validation, containment corrections, and leakage sealing. Next, optimize setpoints and control sequencing. Replace or expand major equipment only after those steps clarify the remaining gap. This phased model reduces project risk and supports clearer investment justification.
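When downtime windows are limited, this phasing can be sketched as a greedy scheduler. Tasks are placed in phase order, each phase may only use windows after the last window used by the previous phase, and if an earlier phase cannot fit, later phases stay unscheduled. Task names, durations, and window lengths are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    phase: int       # 1 = diagnostics and containment, 2 = control tuning, 3 = major equipment
    window_min: int  # change-window minutes the task needs


def schedule(tasks: list[Task], windows_min: list[int]) -> tuple[list[list[str]], list[str]]:
    """Greedy phased plan: returns (task names per window, unscheduled task names)."""
    remaining = list(windows_min)
    plan: list[list[str]] = [[] for _ in remaining]
    unscheduled: list[str] = []
    start = 0        # earliest window the current phase may use
    last_used = -1   # latest window used by any scheduled task so far
    phase = None
    blocked = False  # once an earlier phase fails to fit, later phases wait
    for task in sorted(tasks, key=lambda t: t.phase):
        if task.phase != phase:
            blocked = blocked or bool(unscheduled)
            start = max(start, last_used + 1)
            phase = task.phase
        if blocked:
            unscheduled.append(task.name)
            continue
        for w in range(start, len(remaining)):
            if remaining[w] >= task.window_min:
                remaining[w] -= task.window_min
                plan[w].append(task.name)
                last_used = max(last_used, w)
                break
        else:  # no window had room for this task
            unscheduled.append(task.name)
    return plan, unscheduled


tasks = [
    Task("sensor validation walk-down", 1, 60),
    Task("blanking panels and cutout sealing", 1, 120),
    Task("setpoint and sequencing changes", 2, 90),
    Task("cooling unit replacement", 3, 240),
]
plan, waiting = schedule(tasks, windows_min=[240, 120, 240])
for i, names in enumerate(plan):
    print(f"window {i + 1}: {names}")
print("unscheduled:", waiting)
```

Keeping each phase in its own later window leaves time to measure the effect of earlier fixes before committing to major equipment work.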

Why choose us for environment control assessment and planning?

G-SSI supports project managers and engineering leads who need more than general advice on data center environment control. Our strength lies in benchmarking environmental control decisions against the reliability demands of semiconductor-related infrastructure, industrial-grade sensing ecosystems, thermal management requirements, and data fidelity expectations. That perspective helps teams avoid generic fixes that look acceptable on paper but underperform in critical operations.

You can contact us to discuss practical project needs, including:

- Parameter confirmation for temperature and humidity control.

- Solution selection for airflow optimization and monitoring architecture.

- Delivery planning for phased implementation.

- Customized evaluation for high-density or sensitive electronics environments.

- Certification and standards alignment considerations.

- Sample or pilot-scope support for monitoring layouts.

- Quotation discussions tied to project schedule and risk level.

If your team is deciding what to fix first, bring the real constraints: rack density, failure history, control instability, expansion plans, and compliance expectations. With the right baseline data and a disciplined prioritization path, data center environment control becomes a manageable engineering decision rather than a recurring emergency.
