Autonomous Systems OEM Selection: Common Risks and Red Flags

Selecting an Autonomous Systems OEM is no longer a routine sourcing task but a strategic risk decision for business evaluators. In markets shaped by semiconductor integrity, sensor accuracy, and supply chain resilience, early warning signs can hide behind polished claims and incomplete certifications. This article highlights the most common risks and red flags to help decision-makers screen partners with greater confidence, technical clarity, and long-term commercial discipline.

Why Scenario Differences Matter When Evaluating an Autonomous Systems OEM

For a business evaluator, the phrase Autonomous Systems OEM can cover very different commercial realities. A supplier suitable for warehouse robotics may be a poor fit for off-highway vehicles, smart industrial inspection platforms, or power-sensitive edge infrastructure. The risk profile changes with operating temperature, mission duration, sensor stack complexity, and required safety validation. In many projects, the first failure is not technical execution but selecting an OEM whose strengths belong to another scenario.

This matters even more in environments influenced by semiconductor availability and sensory reliability. In autonomous systems, a 6-month supply disruption in a mature-node MCU, a 2% drift in inertial sensing, or an undocumented change in power module packaging can alter system behavior, cost forecasting, and field maintenance plans. Business evaluators therefore need to move beyond price sheets and pilot demos toward scenario-based qualification.

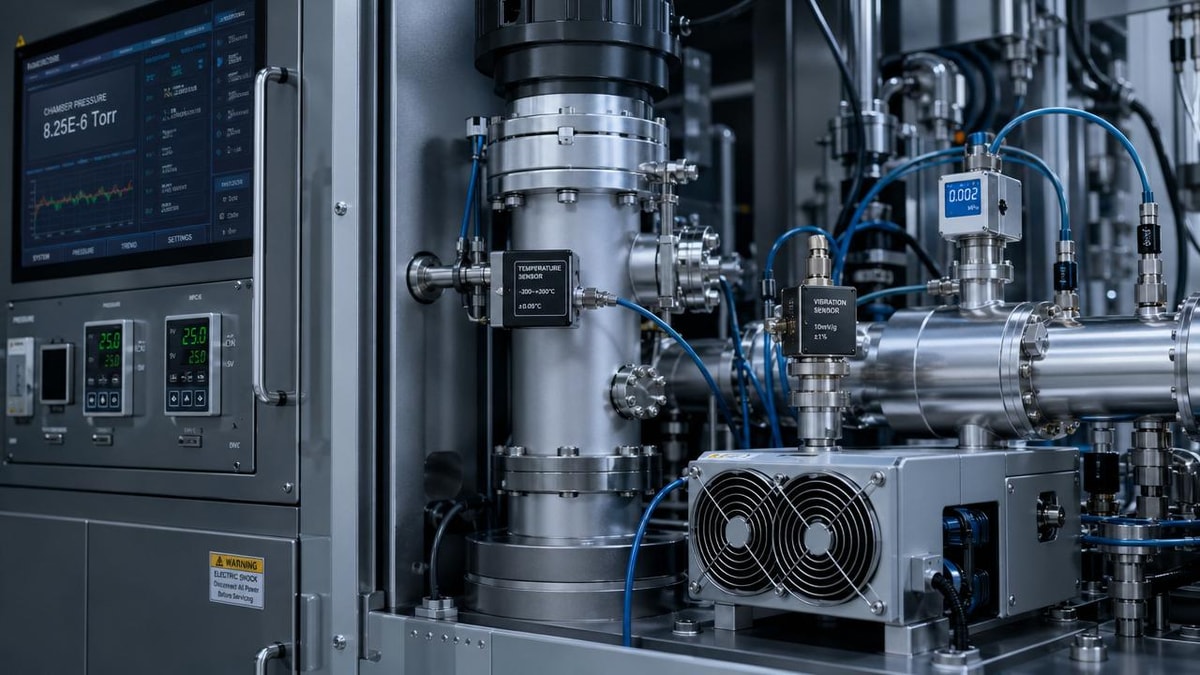

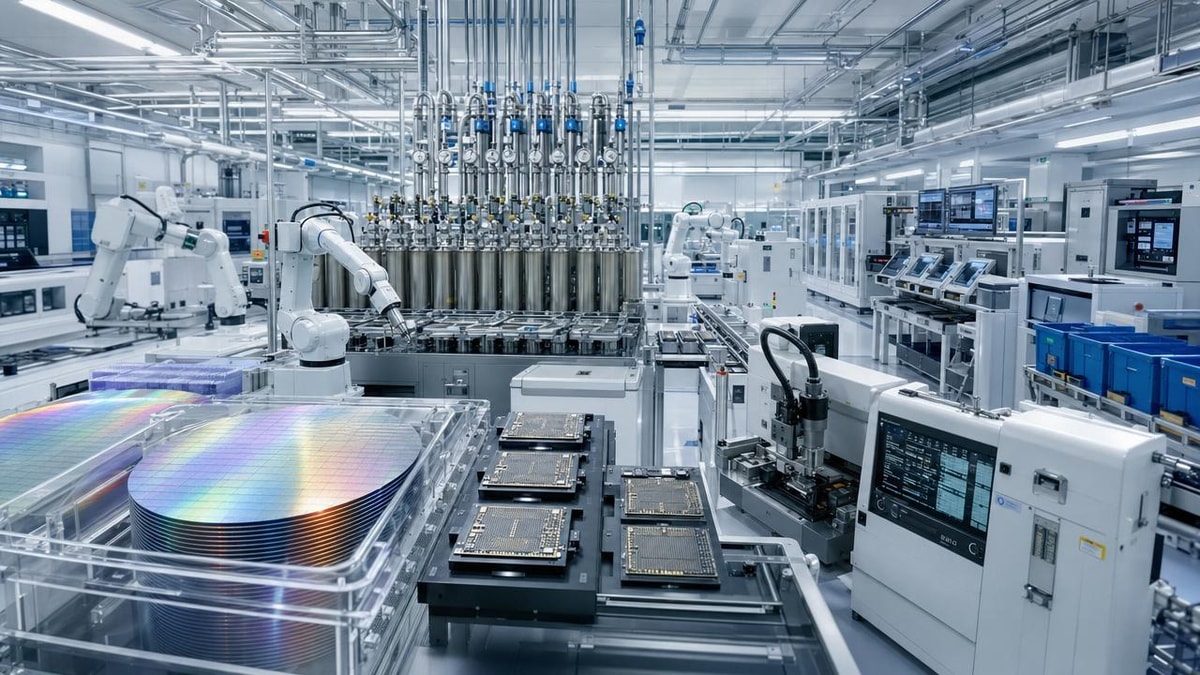

From the perspective of G-SSI’s industrial focus, the highest-risk blind spots often emerge where silicon performance, packaging quality, environmental control, and sensor calibration intersect. That is why screening an Autonomous Systems OEM should include fabrication resilience, test traceability, thermal margins, and component substitution discipline, especially when product lifecycles extend 3 to 7 years or more.

A practical scenario lens for business evaluators

Instead of asking whether an OEM is “advanced,” ask whether it is advanced for your operating scenario. A port logistics vehicle operating 18 to 20 hours per day has a different risk tolerance than a campus delivery robot running at controlled speeds. Likewise, an industrial inspection drone exposed to vibration, dust, and intermittent connectivity requires a different component assurance model than an indoor mobile manipulator.

- Mission-critical outdoor systems usually demand stronger thermal design, ingress protection discipline, and replacement-part continuity.

- High-duty industrial systems depend more heavily on stable power semiconductors, connector reliability, and preventive diagnostics.

- Mixed-fleet or export-oriented deployments require clearer compliance mapping, firmware governance, and multi-source component planning.

A sound Autonomous Systems OEM evaluation framework should therefore begin with scenario segmentation, not vendor presentation quality. This one change often reduces avoidable sourcing mistakes within the first 30 to 45 days of qualification.

Typical Application Scenarios and Where Red Flags Usually Appear

Most procurement failures happen because decision-makers apply a generic checklist to highly specific applications. The table below compares common scenarios where an Autonomous Systems OEM may be considered and highlights what business evaluators should treat as early warning signals before RFQ finalization.

The main takeaway is that red flags are scenario-specific. A weak field-service plan may be manageable in a pilot lab, but unacceptable in a 100-unit logistics deployment. Similarly, incomplete calibration records may not surface during a demonstration, yet become costly when industrial inspection quality depends on repeatable sensor data over several quarters.

Scenario 1: Warehouse and intralogistics systems

In warehouse automation, buyers often focus on navigation software and throughput, but the hidden risk is supportability at scale. If an Autonomous Systems OEM cannot clearly specify spare-parts stocking windows, battery cycle assumptions, or controller replacement lead times, the business case can deteriorate after the first 50 to 100 units. Indoor systems are sometimes assumed to be low risk, yet fleet-level downtime can become expensive very quickly.

A red flag in this scenario is vague language around sensor interchangeability. If the OEM says lidar, IMU, or camera modules are “equivalent” across builds without a documented validation matrix, evaluators should ask whether SLAM behavior, obstacle detection, and safety margins were reverified after substitution. Mature sourcing organizations usually require change notification windows of 60 to 90 days for critical components.

Another warning sign is when charging infrastructure, BMS logic, and motor control electronics come from disconnected vendors with no unified fault reporting. This often produces fragmented accountability when thermal events, battery degradation, or field resets begin appearing after 9 to 12 months of operation.

Scenario 2: Outdoor mobility and harsh environments

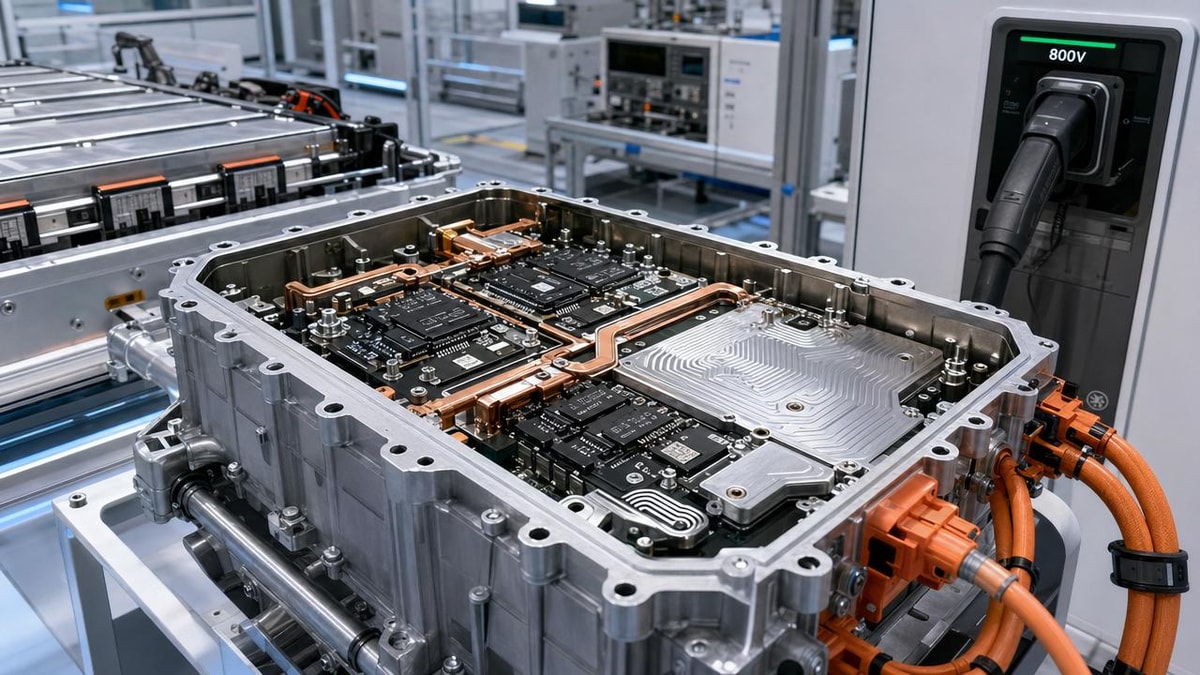

For outdoor autonomous systems, environmental resilience moves to the top of the checklist. Evaluators should examine thermal derating curves, enclosure contamination controls, connector sealing, and power semiconductor choices such as SiC or conventional silicon solutions where relevant. If the OEM cannot explain how the system performs across a realistic operating band, for example from -20°C to 55°C, the apparent sophistication of the platform should be treated cautiously.

This is also where semiconductor packaging and testing discipline becomes commercially important. Vibration-sensitive interconnects, unstable board-level assembly, or an insufficient burn-in strategy may not cause failures in short acceptance tests. However, under repeated shock cycles and high-duty operation, weak process control can surface as intermittent sensing faults or power-stage instability.

An Autonomous Systems OEM targeting outdoor or industrial mobility should be able to discuss field return analysis, component derating, and environmental validation with precision. When responses remain marketing-oriented rather than test-oriented, business evaluators should slow the process and request evidence before proceeding.

Scenario 3: Industrial inspection and sensory infrastructure

Inspection robots, machine vision carriers, and sensory edge systems create a different type of risk: data that appears available but is not decision-grade. In these use cases, sensor fusion quality, calibration traceability, timing accuracy, and EMI resistance matter as much as mobility performance. If the Autonomous Systems OEM cannot show repeatable calibration procedures or explain how sensor drift is managed over 6, 12, and 24 months, the output may be operationally unreliable.
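One way to make the drift question concrete is to compare recorded calibration error at each checkpoint against an agreed tolerance. The sketch below is illustrative only: the `DriftRecord` structure, the `drift_exceedances` helper, the sample readings, and the 2.0% tolerance are all assumptions, not values taken from any OEM or standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriftRecord:
    """One calibration checkpoint (hypothetical structure)."""
    month: int        # months since commissioning
    error_pct: float  # measured error against a reference, in percent


def drift_exceedances(records, tolerance_pct):
    """Return checkpoints, in time order, whose absolute error exceeds the tolerance."""
    ordered = sorted(records, key=lambda r: r.month)
    return [r for r in ordered if abs(r.error_pct) > tolerance_pct]


# Illustrative readings at the 6-, 12-, and 24-month checkpoints.
history = [
    DriftRecord(month=6, error_pct=0.4),
    DriftRecord(month=12, error_pct=1.1),
    DriftRecord(month=24, error_pct=2.6),
]

# Assumed tolerance; the real figure belongs in the agreed acceptance criteria.
flagged = drift_exceedances(history, tolerance_pct=2.0)
for record in flagged:
    print(f"Month {record.month}: {record.error_pct}% exceeds tolerance")
```

An OEM with repeatable calibration procedures should be able to supply the equivalent of `history` from its own records. If it cannot, that gap is itself a finding.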

Another concern is hidden dependence on fragile upstream materials or process nodes. MEMS sensors, precision analog ICs, and specialized packaging may rely on a narrow supplier base. Evaluators should ask whether critical sensing components have alternate sources, what the qualification burden would be, and whether firmware compensation models are component-specific.

For businesses managing regulated infrastructure, there is also a governance angle. The OEM should maintain test records, lot traceability, and controlled revision history. If software and hardware changes cannot be linked to validation outcomes, the risk is not only technical but contractual.

How to Spot Common Risks Before Commercial Commitment

A practical Autonomous Systems OEM review should combine sourcing, engineering, and lifecycle questions. Too many evaluations stop at demo performance, unit pricing, and broad compliance statements. The stronger approach is to identify failure pathways before volume commitment, especially in programs with 12-month rollout plans or multi-site deployment targets.

The table below summarizes common risk categories that business evaluators encounter across autonomous systems procurement. It can be used as a pre-award checkpoint when comparing two or three shortlisted OEMs.

These categories are especially relevant where semiconductor reliability and sensory fidelity influence commercial outcomes. An Autonomous Systems OEM that cannot translate technical controls into lifecycle stability often creates downstream cost in commissioning delays, retrofit work, and service burden.

Checklist of red flags during RFQ and factory review

Commercial and operational warning signs

- Lead times are quoted as fixed numbers without a range, such as “8 weeks guaranteed,” despite volatile semiconductor categories.

- Critical components are described only at a family level, with no visibility into package version, fab source, or test status.

- Service SLAs are promised globally, but there is no named regional support structure or spare-parts stocking logic.

- Unit economics depend on future scale assumptions that are not supported by current manufacturing capacity.

Technical and quality warning signs

- Environmental test language is broad, but no test duration, pass criteria, or retest protocol is provided.

- Sensor performance is demonstrated with ideal datasets rather than scenario-relevant field data.

- Board revisions and firmware changes are frequent, yet change-control records are incomplete or informal.

- Thermal design claims are made without junction temperature discussion, derating rationale, or fault event logging.

When two or more of these signs appear together, evaluators should assume a higher implementation risk even if the OEM performs well in meetings. The purpose is not to reject every imperfect supplier, but to price risk correctly and request mitigation before contract signature.
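The "two or more signs" rule can be captured in a simple tally that a review team fills in after an RFQ response or factory visit. This is a minimal sketch: the flag identifiers and the `assess_red_flags` helper are hypothetical names that paraphrase the checklist above, and the threshold of two comes from the rule of thumb in the preceding paragraph.

```python
# Hypothetical identifiers paraphrasing the checklist items above.
COMMERCIAL_FLAGS = {
    "fixed_lead_time_no_range",
    "family_level_component_detail",
    "global_sla_no_regional_support",
    "unit_economics_need_unproven_scale",
}
TECHNICAL_FLAGS = {
    "no_test_duration_or_pass_criteria",
    "ideal_dataset_demos_only",
    "informal_change_control",
    "thermal_claims_without_derating",
}
ALL_FLAGS = COMMERCIAL_FLAGS | TECHNICAL_FLAGS


def assess_red_flags(observed):
    """Group observed flags by type; two or more flags means elevated risk."""
    observed = set(observed)
    unknown = observed - ALL_FLAGS
    if unknown:
        raise ValueError(f"Unrecognized flags: {sorted(unknown)}")
    return {
        "commercial": sorted(observed & COMMERCIAL_FLAGS),
        "technical": sorted(observed & TECHNICAL_FLAGS),
        "elevated_risk": len(observed) >= 2,
    }


# Example: one commercial and one technical sign observed together.
result = assess_red_flags(["fixed_lead_time_no_range", "informal_change_control"])
print(result["elevated_risk"])
```

The tally does not replace judgment. It only makes sure co-occurring signs are recorded and priced into the mitigation request rather than discounted after a strong meeting.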

Scenario-Based Qualification Strategy for Business Evaluators

An effective Autonomous Systems OEM selection process should reflect deployment scale, criticality, and operational exposure. A 10-unit pilot for a controlled environment does not justify the same diligence level as a 300-unit rollout across multiple facilities. Yet even smaller projects benefit from disciplined gates because component changes, calibration drift, and software update issues often emerge before scale.

For most buyers, a 4-stage approach works well: scenario definition, technical-commercial screening, evidence review, and limited field validation. A closing step then locks post-approval obligations. This structure gives procurement teams enough depth to detect weak points without turning the process into a long engineering audit.

Recommended qualification path

- Define the operating scenario clearly, including duty cycle, temperature band, contamination level, network dependency, and expected service window.

- Identify the top 5 to 8 commercial risks, such as supply continuity, sensor accuracy retention, or maintenance response time.

- Request evidence tied to those risks, including test summaries, change-control practice, calibration process, and key component lifecycle status.

- Run a limited field validation over a realistic period, often 30 to 90 days, with agreed fault logging and acceptance criteria.

- Lock post-approval obligations such as change notification, spare support period, and requalification triggers.

This method is especially useful when comparing OEMs with different strengths. One supplier may offer superior perception software, while another provides stronger manufacturing traceability and more resilient semiconductor sourcing. Scenario-based scoring allows decision-makers to prioritize what matters commercially rather than what appears impressive in isolation.
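Scenario-based scoring can be sketched as a weighted average in which the weights, not the ratings, carry the scenario. Everything in the example below is assumed for illustration: the criteria names, the weights for an outdoor high-duty deployment, and the 1 to 5 ratings for two hypothetical suppliers.

```python
def weighted_score(ratings, weights):
    """Weighted average of ratings; weights need not sum to 1."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"Missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("Weights must sum to a positive value")
    return sum(ratings[c] * w for c, w in weights.items()) / total_weight


# Hypothetical weights for an outdoor, high-duty deployment.
outdoor_weights = {
    "perception_software": 0.2,
    "manufacturing_traceability": 0.3,
    "semiconductor_sourcing": 0.3,
    "field_service": 0.2,
}

# Hypothetical 1-5 ratings agreed by the evaluation team.
oem_a = {"perception_software": 5, "manufacturing_traceability": 2,
         "semiconductor_sourcing": 3, "field_service": 3}
oem_b = {"perception_software": 3, "manufacturing_traceability": 5,
         "semiconductor_sourcing": 4, "field_service": 4}

scores = {
    name: weighted_score(ratings, outdoor_weights)
    for name, ratings in {"OEM A": oem_a, "OEM B": oem_b}.items()
}
for name, score in scores.items():
    print(f"{name}: {score:.2f}")
```

Under these assumed weights, the supplier with stronger traceability and sourcing ranks above the one with better perception software. Shifting the weights toward perception for a controlled indoor scenario could reverse the ranking, which is the point of scoring by scenario.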

What to ask by business type

Large enterprises usually need a broader governance package from an Autonomous Systems OEM: revision control, formal quality escalation, supply-chain disclosure, and a multi-site service structure. Mid-sized firms may place higher weight on implementation speed, practical support, and lower engineering overhead. In both cases, evaluators should ask how the OEM handles component obsolescence over a 24- to 60-month planning horizon.

If your business depends on semiconductor-intensive performance, such as high-efficiency motor drives, edge AI modules, or sensor-dense inspection payloads, pay special attention to packaging, thermal pathways, and qualification consistency. These are the areas where hidden instability often translates into field failures that are costly to diagnose later.

Common Misjudgments That Lead to the Wrong Autonomous Systems OEM

One common misjudgment is treating prototype success as proof of supply readiness. A polished pilot can hide fragile sourcing, manual calibration steps, or unstable build consistency. If the OEM has not demonstrated controlled scaling from pilot to repeatable production, business evaluators should assume there may be an execution gap between the first 5 units and the next 100.

Another error is overvaluing certification language without checking scope. References to SEMI standards, AEC-style component quality expectations, or laboratory practice aligned with ISO/IEC 17025 can support credibility, but only if the OEM can explain what has actually been tested, by whom, and under what conditions. Broad references with no scenario linkage should not be mistaken for complete risk coverage.

A third misjudgment is ignoring second-order dependencies. In autonomous systems, performance is rarely determined by a single subsystem. A stable perception stack depends on sensor quality, timing integrity, board assembly discipline, power cleanliness, and enclosure conditions. A capable Autonomous Systems OEM should be able to discuss these interdependencies rather than speaking only in software or only in mechanics.

A short decision filter before final selection

- Can the OEM explain which components are single-source and what mitigation exists if lead times stretch beyond 20 to 26 weeks?

- Can it show how sensor accuracy is maintained over time, not just on day-one acceptance?

- Can it identify what changes would require revalidation in your specific deployment scenario?

- Can it support both immediate delivery needs and a longer lifecycle plan without depending on informal commitments?

If the answer is weak on several points, the issue is not necessarily that the supplier lacks innovation. The issue is that the fit may be wrong for your business scenario, risk tolerance, or deployment scale.

Why Choose Us for Autonomous Systems OEM Evaluation Support

At G-SSI, we approach Autonomous Systems OEM selection through the industrial realities that shape long-term success: semiconductor reliability, sensor integrity, packaging quality, environmental resilience, and supply continuity. This is valuable for business evaluators who need more than a vendor pitch and want structured support in comparing technical claims against procurement risk.

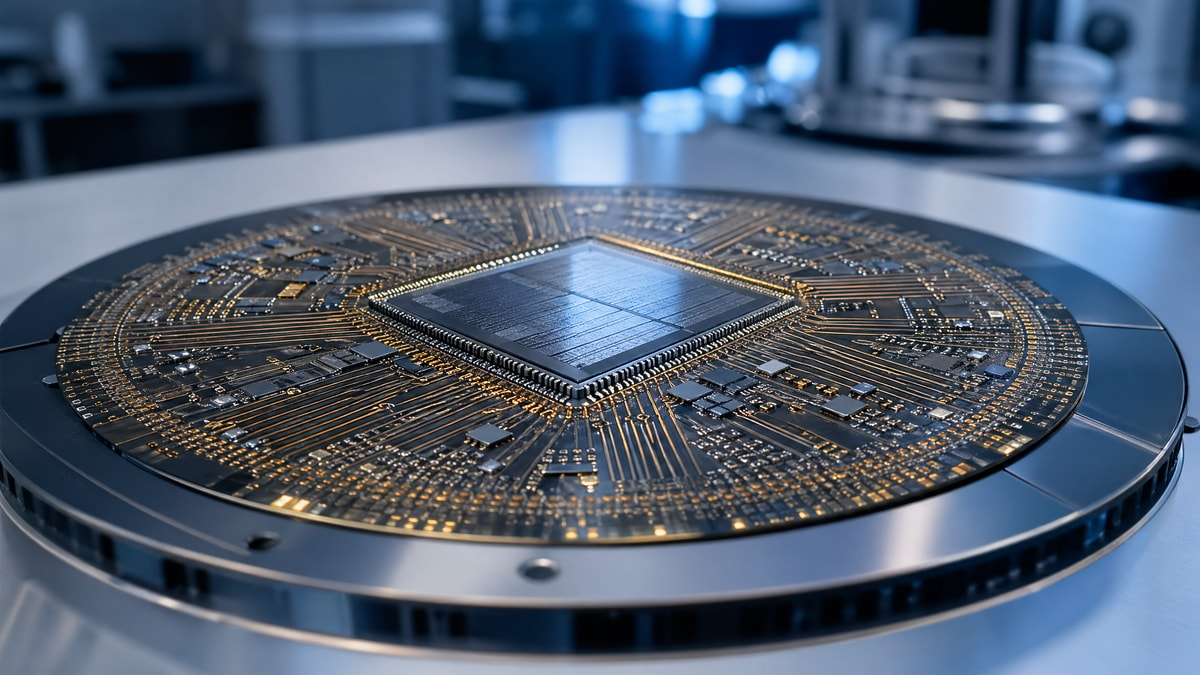

Our perspective is built around five critical pillars of the silicon value chain: power semiconductors, advanced packaging and testing, industrial-grade MEMS and smart sensors, high-purity electronic materials, and fabrication environment control. That enables more disciplined assessment of issues such as SiC or conventional power-stage suitability, calibration governance, thermal margins, component substitution risk, and quality documentation expectations.

If you are screening an Autonomous Systems OEM for warehouse automation, industrial inspection, outdoor mobility, or sensor-intensive infrastructure, contact us for focused support. We can help you confirm application parameters, compare candidate architectures, review delivery-cycle exposure, discuss certification expectations, assess sample and pilot needs, and structure quotation discussions around real operational risk rather than assumptions alone.
